Shadow AI: The Fastest-Growing Security Risk No One Is Tracking

AI adoption isn’t happening through formal rollout plans. It’s happening quietly, across endpoints, workflows, and teams.

Employees are installing AI tools. Developers are integrating copilots into daily workflows. Autonomous agents are being deployed to automate tasks. And in most organizations, this activity is happening without centralized visibility, governance, or control.

This is Shadow AI. And it’s quickly becoming one of the largest unmanaged risks in the enterprise. Most security teams are focused on external threats, but a growing portion of risk is now originating from inside the environment itself, through tools and systems they don’t even know exist.

What Is Shadow AI?

Shadow AI refers to the use of artificial intelligence tools, agents, and systems within an organization without formal approval, oversight, or security governance.

It includes:

- Employees using generative AI tools like ChatGPT or coding assistants without policy guidance

- AI plugins, extensions, or local models installed on endpoints

- Autonomous agents executing workflows or scripts across systems

- AI tools accessing internal data sources, APIs, or repositories

In short, Shadow AI is AI adoption happening faster than security can track it. And according to Morphisec’s research, most organizations have little to no visibility into how AI is actually being used across their environments.

Why Shadow AI Is Growing So Fast

Shadow AI isn’t a user behavior problem. It’s a structural one.

AI tools are:

- Easy to access

- Instantly valuable

- Often free or low cost

- Designed for rapid integration into workflows

At the same time, organizations are under pressure to:

- Increase productivity

- Accelerate development

- Embrace AI-driven innovation

The result?

AI adoption is happening at the edge, without waiting for IT or security approval. This creates a growing gap between what the business is using and what security can see and control.

The Hidden Risks of Shadow AI

At first glance, Shadow AI looks like a productivity win. But underneath, it introduces a new layer of risk that most organizations are not equipped to manage.

1. Data Exposure and Leakage

Many AI tools interact directly with sensitive data, including source code, internal documents, and customer and financial data.

When these tools connect to external APIs or cloud-based models, that data may be transmitted outside the organization’s control. Without visibility or policy enforcement, organizations have no way to:

- Track what data is being accessed

- Control where it’s being sent

- Prevent unintended exposure
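
As a rough illustration of what "control where it’s being sent" could look like in practice, the sketch below scans an outgoing prompt for a few sensitive patterns before it is allowed to leave for an external model. The pattern set and the function names (`find_sensitive`, `safe_to_send`) are assumptions made for this example, not part of any product or of the research cited here, and a real control would need a far broader, tuned rule set.

```python
import re

# Illustrative patterns only (assumed for this sketch); real coverage
# would need to be much broader and tuned to the organization's data.
SENSITIVE_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the sorted names of every pattern that matches the text."""
    return sorted(
        name
        for name, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(text)
    )


def safe_to_send(text: str) -> bool:
    """True only if no sensitive pattern was found in the outgoing prompt."""
    return not find_sensitive(text)
```

A check like this only helps if it sits on the path between the user and the AI tool, which is exactly what Shadow AI usage bypasses.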

2. Zero Visibility into AI Behavior

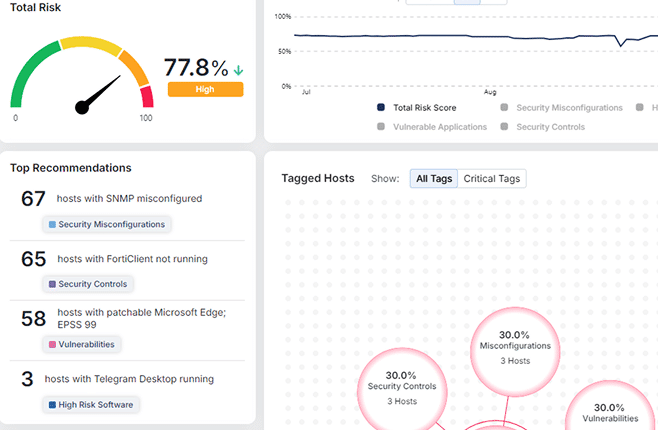

You can’t secure what you can’t see. Most organizations today cannot:

- Identify all AI tools running across endpoints

- Distinguish between approved and unapproved usage

- Monitor how AI systems behave in real time
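
To make the first two gaps concrete, here is a minimal sketch of separating approved from unapproved AI tools once endpoint observations are available. The tool names, the allowlist, and the `classify_observations` helper are all hypothetical; how the observations are collected (process lists, extension manifests, and so on) is outside the scope of this example.

```python
from collections import defaultdict

# Hypothetical allowlist and catalog of known AI tools (assumptions).
APPROVED_AI_TOOLS = {"approved-copilot"}
KNOWN_AI_TOOLS = {"approved-copilot", "local-llm-runner", "browser-ai-extension"}


def classify_observations(observations):
    """Group (endpoint, tool) pairs into approved and unapproved AI tools.

    Tools that are not in KNOWN_AI_TOOLS are skipped, since this sketch
    has no way to recognize them as AI.
    """
    report = {"approved": defaultdict(set), "unapproved": defaultdict(set)}
    for endpoint, tool in observations:
        name = tool.strip().lower()
        if name not in KNOWN_AI_TOOLS:
            continue
        bucket = "approved" if name in APPROVED_AI_TOOLS else "unapproved"
        report[bucket][name].add(endpoint)
    return report
```

The obvious limitation is the catalog itself: a tool that is not yet known to be AI never shows up in either bucket, which is the visibility problem in miniature.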

This creates a fragmented (and often nonexistent) view of the AI attack surface. As highlighted in the AI Security Gap whitepaper, organizations are effectively operating blind when it comes to AI activity across their environments.

3. Unauthorized Actions and Automation Risk

AI systems don’t just generate content. They take action. Autonomous agents and AI-driven workflows can:

- Execute scripts

- Modify files

- Trigger processes

- Interact with multiple systems simultaneously

Without runtime control, these actions may:

- Exceed intended permissions

- Execute at scale

- Introduce unintended consequences
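
One way to picture runtime control is a deny-by-default gate that every agent action must pass before it runs. The sketch below is an assumption-laden illustration, not a description of any vendor's enforcement mechanism: the `Action` and `AgentPolicy` types, the action kinds, and the paths are invented for the example. It addresses the three failure modes above by restricting action kinds, scoping targets to allowed directories, and capping the number of actions.

```python
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "read_file", "modify_file", "run_script"
    target: str  # absolute POSIX-style path the action touches


@dataclass
class AgentPolicy:
    allowed_kinds: frozenset
    allowed_roots: tuple
    max_actions: int
    used: int = 0

    def authorize(self, action: Action) -> bool:
        """Deny by default; allow only in-scope actions within the budget."""
        if self.used >= self.max_actions:
            return False  # caps execution at scale
        if action.kind not in self.allowed_kinds:
            return False  # action type exceeds intended permissions
        target = PurePosixPath(action.target)
        if ".." in target.parts:
            return False  # reject path traversal outright
        if not any(target.is_relative_to(root) for root in self.allowed_roots):
            return False  # target is outside the agent's scope
        self.used += 1
        return True
```

The gate only counts actions it allows, so denied attempts do not consume the budget; whether that is the right choice depends on how much an organization wants to penalize repeated out-of-scope attempts.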

4. Privilege Escalation and Access Misuse

AI tools often operate with the same permissions as the user, or with even broader ones.

This creates risk when:

- AI systems access sensitive directories

- Agents execute actions beyond their intended scope

- Permissions are inherited across systems

The result is a potential pathway for:

- Privilege escalation

- Unauthorized system changes

- Expanded attack surfaces

5. AI as an Attack Entry Point

Shadow AI doesn’t just introduce internal risk. It can become an external attack vector.

Threat actors can exploit:

- Compromised AI plugins or extensions

- Malicious or tampered models

- Supply chain vulnerabilities in AI ecosystems

Because these tools often operate within trusted environments, they may bypass traditional security controls entirely.

Why Traditional Security Tools Miss Shadow AI

Most enterprise security tools were not designed to secure AI. That’s because they rely on known threat signatures, behavioral anomalies, and network visibility. Shadow AI doesn’t fit neatly into any of these categories.

AI activity often:

- Occurs locally at the endpoint

- Operates within legitimate applications

- Uses encrypted APIs and trusted connections

- Generates behavior that appears normal

In other words, it looks legitimate. And that’s exactly why it’s so difficult to detect.

As outlined in the whitepaper, modern threats (and AI-driven activity in particular) are increasingly indistinguishable from normal operations, making detection-based approaches less effective.

Shadow AI Is the Front Door to the AI Security Gap

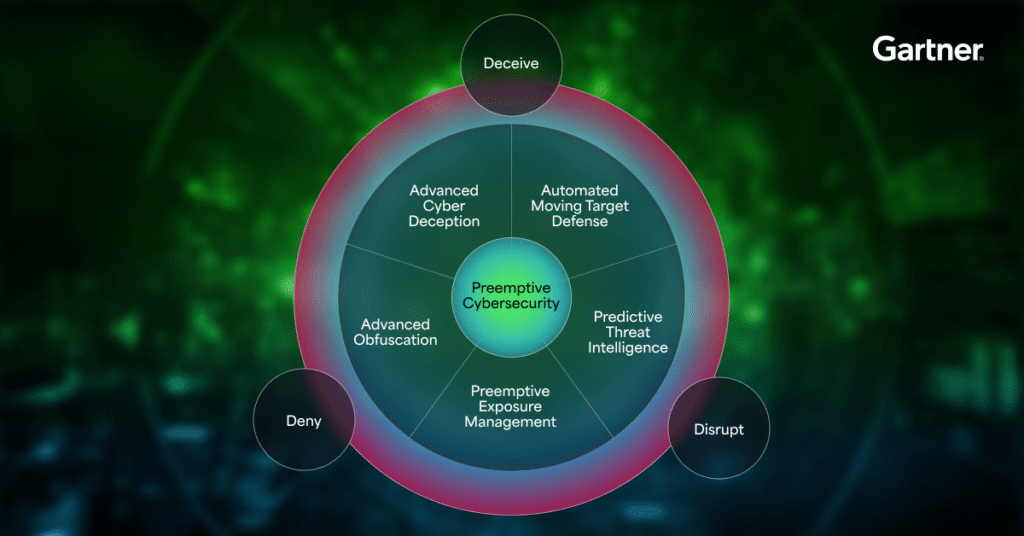

Shadow AI is not just a standalone risk. It’s a core driver of a much larger problem: the AI Security Gap.

This gap is defined by three critical failures:

- Lack of visibility into AI tools and behavior

- Lack of control over how AI operates

- Lack of prevention at the point of execution

Shadow AI sits at the intersection of all three. It expands the attack surface, introduces unmanaged behavior, and creates conditions where threats (whether internal misuse or external exploitation) can execute without resistance.

What Needs to Change

Addressing Shadow AI requires more than visibility. It requires control.

Organizations must shift from:

- Monitoring AI usage → controlling AI behavior

- Observing activity → enforcing policy at runtime

- Detecting threats → preventing execution

In an AI-driven environment, security must operate at the same speed and scale as the systems it is protecting. That means moving closer to the endpoint (where AI processes execute) and ensuring that:

- AI activity is continuously visible

- Behavior is monitored in real time

- Unauthorized actions are stopped before they occur

How to Start Addressing Shadow AI Today

Security leaders don’t need to solve everything at once, but they do need to start. Here are five practical steps:

- Build an AI Inventory — Identify what AI tools, agents, and integrations are in use across the organization.

- Define AI Usage Policies — Establish clear guidelines for how AI tools can access data, systems, and workflows.

- Monitor AI Behavior at Runtime — Move beyond static visibility to understand how AI operates in real time.

- Enforce Control at the Endpoint — Ensure AI actions can be governed and stopped at the point of execution.

- Reduce Reliance on Detection Alone — Prioritize prevention-first strategies that stop threats before they execute.
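
The first two steps can start as something very small. The sketch below, offered as one possible shape rather than a recommended schema, records each AI tool in an inventory together with the data classifications it is permitted to touch, and answers access questions against that record. The `InventoryEntry` fields, the classification labels, and the `may_access` helper are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class InventoryEntry:
    tool: str
    owner: str                 # team or person accountable for the tool
    approved: bool
    allowed_data: set = field(default_factory=set)  # e.g. {"public"}


def may_access(inventory: dict, tool: str, data_class: str) -> bool:
    """Allow access only for approved, inventoried tools and listed data.

    Unknown tools are denied: anything missing from the inventory is
    treated as Shadow AI until someone registers and reviews it.
    """
    entry = inventory.get(tool)
    if entry is None or not entry.approved:
        return False
    return data_class in entry.allowed_data
```

Keeping the policy as data rather than prose makes it something the later steps, runtime monitoring and endpoint enforcement, can actually evaluate.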

The Risk You Can’t See Is the One That Wins

Shadow AI is not a future problem. It’s already embedded in your environment, expanding your attack surface, interacting with sensitive data, and operating beyond the reach of traditional security tools.

The question isn’t whether AI is being used inside your organization. It’s whether you have any control over it. Shadow AI is the starting point of a much larger shift in cybersecurity, one that demands a new approach to visibility, control, and prevention.

Download the whitepaper, The AI Security Gap: Why Detection Fails in the Age of Autonomous Threats, to learn how to close gaps in your security architecture and build a prevention-first strategy for the AI era.
